About me

I am a fourth-year Ph.D. student in Computer Science and Engineering at Notre Dame, where I work in the DM2 Lab advised by Prof. Meng Jiang.

My research focuses on building reliable LLM agents for real-world decision-making tasks that require multi-step reasoning over diverse sources of information. I work at the intersection of retrieval-augmented generation (RAG), tool-augmented language models (TALMs), and learning-based methods to improve agent behavior in imperfect and evolving environments.

More specifically, I study two themes: designing effective tool-use strategies and reliable task-specific tools for realistic data workflows, and improving agent frameworks with learning signals such as verifiability checks, tool outcomes, and consistency constraints. My goal is to make LLM agents more correct, robust, and efficient in dynamic settings.

Outside research, I am very much a cat person, which means I am easily distracted by cats on the internet and in real life 🐈. I am also a proud dad of two amazing cats: Mam (Fish Sauce) and Muoi Tieu (Pepper Salt).

⭐ Recent News

| 📄 Apr, 2026 | OpenTools preprint is now on arXiv. |

| 📄 Apr, 2026 | Disparities in ridesharing platforms (1st-author) accepted at CSCW 2026. |

| 🎉 Mar, 2026 | I will join Oracle as an Applied Scientist Intern this summer, working with Dr. Avi Sil. See you, Redwood City! |

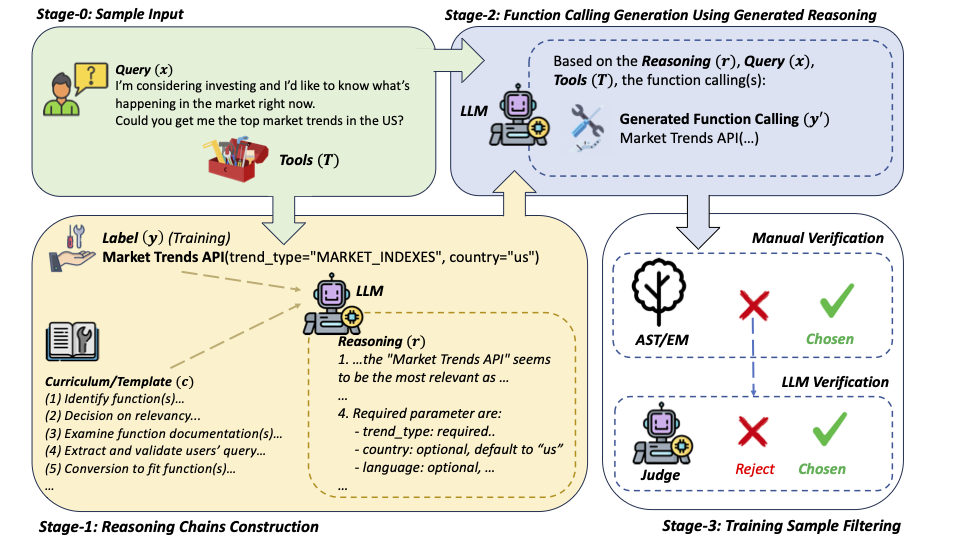

| 📄 Aug, 2025 | LLM Function Calling with Templates (1st-author; work done during my Amazon internship) accepted at EMNLP 2025 Main. |

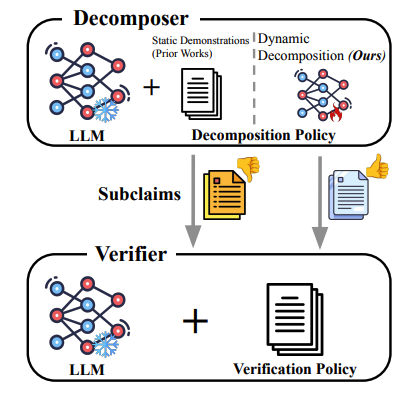

| 📄 May, 2025 | DYDECOMP paper accepted at ACL 2025 Main. |

| 🎉 Aug, 2024 | Happy to announce that I will join Amazon as an Applied Scientist Intern starting in September! See everyone in Palo Alto soon! |

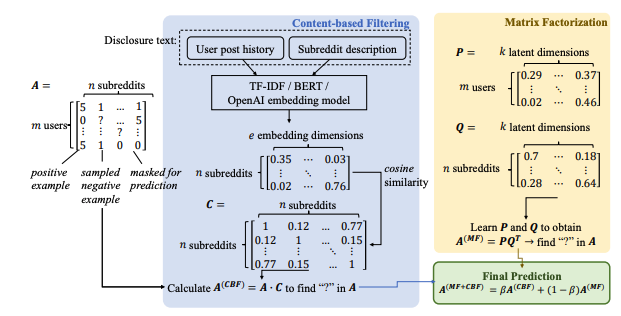

| 📄 May, 2023 | Community Recommendation Using Mental Health Discourse paper accepted at CODI 2023 - ACL 2023. |

| 🎓 Aug, 2022 | Joined the University of Notre Dame as a PhD student in Computer Science & Engineering under the supervision of Prof. Meng Jiang. Go Irish ☘️. |

| 🎓 Dec, 2021 | Graduated with a 4.0 GPA (Summa Cum Laude) with double degrees in Computer Science and Mathematics from Texas Christian University. |

📃 Publications

📧 Contact

I’m best reached via email. I’m always open to interesting conversations and collaboration.

- Email: hdang [at] nd [dot] edu

- Office: 355 Fitzpatrick Hall of Engineering

- Location: University of Notre Dame, Notre Dame, IN 46556